April 10, 2026

Training evaluation is the systematic process of measuring whether your training programs are achieving the expected results: more knowledge, better performance and a real impact on business objectives. Without a structured evaluation, any investment in continuous training operates blind. This guide covers what it is, why it matters, the 7 main models, tools, metrics and the concrete steps to implement it in your organization.

Companies invest considerable money and effort in corporate training so that employees can acquire new skills, update their knowledge and perform their roles more effectively. However, many L&D teams cannot answer a fundamental question: is this training actually working? Training evaluation answers that question systematically. It is not just about measuring whether participants were satisfied; it is about determining whether training has generated real changes in behavior, skills and business results.

A structured training evaluation process enables you to identify which programs deliver the highest return on investment, where the gaps lie and how to continuously improve your L&D strategy. Without this data, decisions about training are made on intuition rather than evidence.

Having a structured evaluation process transforms the way the L&D team manages training. These are the four main advantages:

Evaluation allows you to identify which competencies or knowledge are not being correctly acquired, even when participants complete the course. Without this data, you cannot know whether the program is actually closing the skills gap it was designed to address.

Evaluation data enables you to prioritize which programs to keep, improve or discontinue, and justifies training investment to senior leadership with objective evidence rather than assumptions.

Programs that are evaluated and improved based on real data have a direct impact on employee performance. According to the LinkedIn Workplace Learning Report 2024, organizations with strong learning cultures have 57% higher retention rates than those without.

Systematic feedback from participants — combined with completion and assessment data — allows course creators to identify which modules work well and which need to be redesigned, updated or replaced.

Training evaluation models provide a solid foundation and a framework for analyzing the effectiveness of training programs. Each one has a specific focus, a level of complexity and a type of organization it best suits. Here are the 7 most widely used:

The Kirkpatrick model is the most widely adopted framework in corporate training. It evaluates four cascading levels: Reaction (what did participants think?), Learning (what did they acquire?), Behavior (do they apply it on the job?) and Results (what impact did it have on the organization?). Its strength lies in its simplicity and universality: it applies to virtually any type of program. Its main limitation is that levels 3 and 4 require greater resources and time to measure properly.

The Phillips ROI model extends the Kirkpatrick model by adding a fifth level: Return on Investment (ROI). It proposes converting the level 4 benefits into monetary value and comparing them with the total cost of the program. It is ideal for justifying large training investments to senior leadership, although its full implementation requires methodological rigor and resource commitment.

Roger Kaufman’s model extends Kirkpatrick by incorporating a prior level: the evaluation of inputs and processes. It also elevates the results level to include the impact on society and the end customer, not just the organization. More comprehensive but also more demanding to implement.

CIRO stands for Context, Input, Reaction and Outcome. Developed by Warr, Bird and Rackham, it focuses on context analysis before designing the training: what is the real need? Are the available resources appropriate? It is especially useful when the objective is to evaluate whether training was the right solution to the identified problem.

The Anderson model evaluates the strategic alignment of training: does the training program contribute to the organization’s objectives? It proposes three phases: aligning training with strategy, evaluating the value of training activities and determining the contribution of learning to organizational performance.

Brinkerhoff's model, also known as the Success Case Method (SCM), takes a pragmatic approach: it identifies the participants who have been most and least successful in applying what they learned, and analyzes the causes. Rather than measuring everyone equally, it focuses the evaluation on the extremes to extract actionable insights with minimum effort.
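The selection step of the Success Case Method can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Participant` type, the `application_score` field (a hypothetical 0-100 rating of how well each person applied the training) and the function name are not part of the method itself, and the follow-up interviews that explain the causes remain a qualitative task.

```python
from typing import NamedTuple


class Participant(NamedTuple):
    name: str
    application_score: float  # hypothetical 0-100 rating of on-the-job application


def select_extreme_cases(participants, n=2):
    """Return the n most and n least successful participants, without overlap."""
    ranked = sorted(participants, key=lambda p: p.application_score, reverse=True)
    n = max(0, min(n, len(ranked) // 2))  # keep the two groups disjoint
    most_successful = ranked[:n]
    least_successful = ranked[len(ranked) - n:]
    return most_successful, least_successful


# Example cohort with made-up scores
cohort = [
    Participant("Ana", 92),
    Participant("Ben", 35),
    Participant("Cleo", 78),
    Participant("Dev", 51),
    Participant("Eli", 12),
]
top, bottom = select_extreme_cases(cohort, n=2)
print([p.name for p in top])     # candidates for "what made it work?" interviews
print([p.name for p in bottom])  # candidates for "what got in the way?" interviews
```

Only the two extreme groups are then interviewed, which is what keeps the effort low.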

The CIPP model, developed by Stufflebeam, evaluates four dimensions: Context, Input, Process and Product. It is one of the most comprehensive models and is frequently used in evaluations of complex or long-duration training programs.

To help you choose the most appropriate model for your context, here is a comparison of all 7:

| Model | Best for | Complexity |
|---|---|---|
| Kirkpatrick (4 levels) | Any organization; universal starting point | Medium |
| Phillips ROI (5 levels) | High-investment programs; justification to leadership | High |
| Kaufman (5 levels) | Impact on society and end customer | High |
| CIRO | Validating whether training was the right solution | Medium |
| Anderson (3 phases) | Aligning training with corporate strategy | Medium |
| Brinkerhoff SCM | Agile evaluation with limited resources | Low-Medium |
| CIPP | Complex or long-duration programs | High |

Choosing the model is just the first step. To collect the data that model requires, you need to combine different tools depending on the phase of the process and the type of information you are seeking.

Surveys are the most widely used tool for measuring reaction (Kirkpatrick level 1). The most common format is the post-training satisfaction survey, conducted immediately after the training ends. For measuring transfer (level 3), delayed surveys are used — sent 30 to 90 days after training — to assess whether participants are applying what they learned on the job.

Pre and post assessments, knowledge tests and practical simulations measure learning (level 2). Integrated into an e-learning platform, they generate automatic data without requiring manual collection. The key is to design them with direct alignment to the training objectives.
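As a rough illustration of how pre and post assessment scores can be turned into a learning measure, the sketch below computes the absolute gain and a normalized gain (the share of the available headroom that was actually gained). The function name, the 0-100 scale and the choice of normalized gain are assumptions made for this example, not something prescribed by any of the models above.

```python
def learning_gain(pre_score, post_score, max_score=100.0):
    """Return (absolute gain, normalized gain) for one participant.

    Normalized gain = (post - pre) / (max - pre): the fraction of the
    possible improvement that was achieved. Assumes scores on a 0..max scale.
    """
    absolute = post_score - pre_score
    headroom = max_score - pre_score
    normalized = absolute / headroom if headroom > 0 else 0.0
    return absolute, normalized


absolute, normalized = learning_gain(pre_score=40, post_score=70)
print(absolute, normalized)  # 30 points gained, half of the possible improvement
```

Normalized gain makes participants with very different starting points easier to compare than raw score differences.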

For levels 3 and 4, it is necessary to connect training data with operational performance indicators: productivity, quality, sales, absenteeism or turnover. This requires integration between the LMS and HR or business systems, which is not always straightforward but is essential for demonstrating the real impact of training.

Qualitative tools provide in-depth context that complements quantitative data. They are particularly useful for understanding why a program worked or did not work and what specific obstacles participants encountered when trying to apply what they learned.

An effective training evaluation does not happen at a single moment — it is distributed throughout the entire training cycle.

Evaluation starts before the course begins, with a Training Needs Analysis (TNA) that identifies the gap between current competencies and those required for a role or strategic objective. This pre-evaluation defines what will be measured and at what level.

Formative evaluation occurs during the learning process and serves to monitor progress and make real-time adjustments. It includes quizzes, interactive activities, branching scenarios and formative assessments embedded within the course. Its objective is not to grade but to guide both the participant and the trainer.

Immediately after training ends comes the summative evaluation: final exam, certification test or competency assessment. Between 30 and 90 days later comes the transfer evaluation: has the participant applied what they learned on the job? At 6 or 12 months comes the results evaluation: has the training had an impact on the business KPIs it aimed to improve?
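The timing described above can be turned into concrete calendar dates with a small helper. This is a minimal sketch: the function name and dictionary keys are hypothetical, and "6 months" is approximated as 182 days for simplicity.

```python
from datetime import date, timedelta


def evaluation_schedule(training_end):
    """Map each post-training evaluation to a date, given the training end date."""
    return {
        "summative": training_end,                                  # right after training
        "transfer_window_start": training_end + timedelta(days=30),
        "transfer_window_end": training_end + timedelta(days=90),
        "results_review": training_end + timedelta(days=182),       # roughly 6 months
    }


schedule = evaluation_schedule(date(2026, 1, 15))
for step, due in schedule.items():
    print(f"{step}: {due.isoformat()}")
```

A schedule like this can feed calendar reminders or automated survey dispatch, depending on what your tooling supports.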

Metrics are the language with which L&D teams communicate the value of training to leadership. These are the six most important ones, with industry reference benchmarks:

To calculate training cost per employee, divide the total training cost (including platform, content, facilitation and participant time) by the number of trained employees. Industry benchmark: between $1,000 and $1,500 per employee per year according to the Association for Talent Development (ATD) 2023 State of the Industry report. This figure varies significantly by sector and organization size.
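The calculation is simple enough to express directly. In the sketch below the function name, parameter names and all figures are illustrative assumptions; the cost components are the four mentioned above.

```python
def cost_per_employee(platform, content, facilitation, participant_time, trained_employees):
    """Total training cost divided by the number of trained employees."""
    if trained_employees <= 0:
        raise ValueError("trained_employees must be positive")
    total_cost = platform + content + facilitation + participant_time
    return total_cost / trained_employees


# Made-up annual figures for a 100-person organization
print(cost_per_employee(
    platform=20_000,
    content=35_000,
    facilitation=15_000,
    participant_time=50_000,
    trained_employees=100,
))  # 1200.0
```

Including participant time, which is often left out, can change the result substantially.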

The completion rate is the percentage of enrolled employees who complete the training. Industry benchmark: 58–73% for online courses according to Docebo’s 2023 benchmark. A rate below 50% usually signals a content, relevance or accessibility problem worth investigating.
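A minimal sketch of this metric, including the 50% warning threshold mentioned above, might look as follows. The function names and sample numbers are hypothetical.

```python
def completion_rate(enrolled, completed):
    """Percentage of enrolled employees who completed the training."""
    if enrolled <= 0:
        raise ValueError("enrolled must be positive")
    return 100.0 * completed / enrolled


def needs_investigation(rate, threshold=50.0):
    """Flag courses whose completion rate falls below the threshold."""
    return rate < threshold


rate = completion_rate(enrolled=200, completed=130)
print(rate, needs_investigation(rate))  # 65.0 False
```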

Knowledge retention is measured through assessments conducted 30, 60 or 90 days after training. It is directly related to the Ebbinghaus forgetting curve: without spaced reinforcement, employees forget up to 70% of content within 24 hours. Platforms with microlearning and spaced repetition significantly improve this metric.
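One simple way to quantify retention, assuming you have each participant's score right after training and again at follow-up, is the ratio between the two. This definition and the function name are assumptions for illustration; other definitions (for example, the share of participants who still pass the test) are equally valid.

```python
def retention_rate(post_training_score, follow_up_score):
    """Follow-up score as a percentage of the score obtained right after training."""
    if post_training_score <= 0:
        raise ValueError("post_training_score must be positive")
    return 100.0 * follow_up_score / post_training_score


# Scored 80 at the end of the course and 60 on the same test 30 days later
print(retention_rate(post_training_score=80, follow_up_score=60))  # 75.0
```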

Transfer to the job is the percentage of employees who demonstrably apply what they learned. It is measured through 360° assessments, manager observation or specific performance indicators. It is the most strategically valuable metric but also the most complex to capture systematically.

Participant satisfaction is measured through post-training surveys (NPS, Likert scale). Benchmark: above 4/5 or 80 NPS is considered good. Low satisfaction does not necessarily mean low learning, but it does signal problems with relevance, format or delivery quality.
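For reference, the sketch below computes a Net Promoter Score using the standard definition: on a 0-10 "would you recommend this training?" question, respondents answering 9 or 10 are promoters, those answering 0 to 6 are detractors, and NPS is the percentage of promoters minus the percentage of detractors. The function name and the sample responses are made up.

```python
def net_promoter_score(responses):
    """NPS from 0-10 survey responses: % promoters (9-10) minus % detractors (0-6)."""
    if not responses:
        raise ValueError("responses must not be empty")
    promoters = sum(1 for r in responses if r >= 9)
    detractors = sum(1 for r in responses if r <= 6)
    return 100.0 * (promoters - detractors) / len(responses)


survey = [10, 9, 9, 8, 7, 6, 3, 10, 9, 10]
print(net_promoter_score(survey))  # 40.0 (6 promoters, 2 detractors, 10 responses)
```

Because NPS ranges from -100 to +100, it is not directly comparable with a Likert average on a 1-5 scale.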

Training ROI is the most comprehensive metric: it compares the economic benefit generated by training with its total cost. The basic formula: ROI (%) = [(Total Training Benefit – Total Cost) / Total Cost] × 100, where the difference between benefit and cost is the net benefit. Recommended for programs with the highest investment or strategic importance.
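A worked example may help. The sketch below assumes the benefit figure is the gross monetary benefit attributed to the training, before subtracting its cost; the function name and the numbers are hypothetical.

```python
def training_roi_percent(total_benefit, total_cost):
    """ROI (%) = (total benefit - total cost) / total cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    net_benefit = total_benefit - total_cost
    return 100.0 * net_benefit / total_cost


# A program that cost 100,000 and produced an estimated 150,000 in benefits
print(training_roi_percent(total_benefit=150_000, total_cost=100_000))  # 50.0
```

An ROI of 50% here means every unit of currency spent returned the original amount plus half of it again. A negative result means the program cost more than the benefit it generated.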

Implementing a complete training evaluation system does not require tackling everything at once. This is a practical sequence that any L&D team can follow:

Even experienced L&D teams repeat the same evaluation mistakes. These are the most frequent ones:

Having the right technology makes the difference between a reactive and a proactive evaluation strategy. isEazy LMS centralizes all training metrics in real time — completion rates, scores, time spent, dropout points and progress by user, team or location — and its advanced analytics module allows you to create custom reports and track the ROI of each program without manual effort.

isEazy Author allows you to build interactive courses with embedded assessments: quizzes with detailed feedback, branching scenarios, gamified simulations and competency tests. All interactions are automatically recorded and sent to the LMS, so evaluation data is never lost.

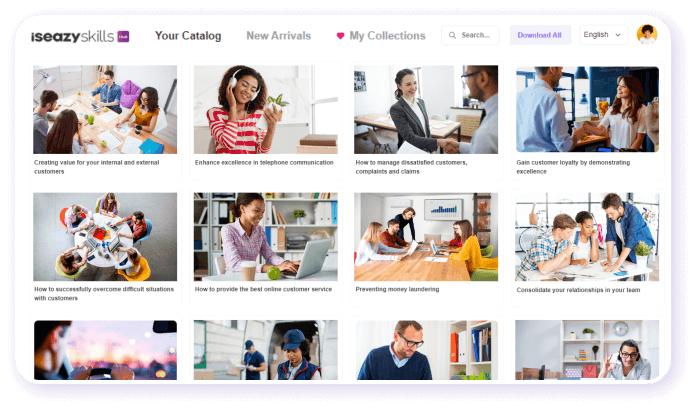

isEazy Skills goes one step further: it maps learning to the specific competencies your organization needs to develop, enabling you to measure not just course completion but actual skills growth over time and its alignment with business objectives.

PreZero is a good example of how a company can transform its internal knowledge into measurable, high-quality e-learning content at scale. With isEazy Author and isEazy LMS, they were able to create training programs and evaluate their effectiveness with real data.

Training evaluation is not a bureaucratic requirement — it is the mechanism that transforms training from an expense into a strategic investment. Organizations that evaluate their programs systematically make better decisions: they invest in what works, improve what does not, and can demonstrate the real value of L&D to leadership with objective data.

You do not need to measure everything at once. Start with levels 1 and 2, choose the model that fits your context and progressively build a data-driven evaluation culture. The technology exists to make this process scalable and efficient — the key is to make evaluation an integral part of every training cycle, not an afterthought.

Do you want to know more about the best strategies to optimize your training programs? Visit our guide on learning strategy or explore how isEazy LMS can help you centralize, manage and evaluate all your training with advanced analytics.

Training evaluation is the systematic process of collecting, analyzing, and interpreting information to determine whether a training program has achieved its objectives and what real impact it has had on employee performance and business results. It goes beyond asking whether participants enjoyed the course: it measures the knowledge acquired, behavioral changes on the job, and the return on training investment. According to the LinkedIn Workplace Learning Report 2024, 89% of L&D professionals consider measuring the impact of training a strategic priority, yet fewer than 40% have a structured evaluation process in place.

The Kirkpatrick model is the most widely used evaluation framework in corporate training. It structures evaluation across four levels: Reaction (what did participants think?), Learning (what knowledge or skills did they acquire?), Behavior (did they apply what they learned on the job?), and Results (what impact did it have on business metrics?). Its popularity stems from being intuitive, scalable, and applicable to any type of training program. The main limitation is that levels 3 and 4 — the most valuable ones — are also the most difficult and costly to measure, as they require follow-up over time and access to performance data.

The most relevant metrics for measuring training program effectiveness are: course completion rate (industry benchmark: 58–73% according to Docebo), assessment pass rate, training cost per employee, participant satisfaction measured through post-training surveys, knowledge retention rate at 30–90 days, and training return on investment (ROI). For strategic upskilling or reskilling programs, it is also essential to measure behavioral change on the job (Kirkpatrick level 3): whether the employee actually applies what they learned. An LMS with advanced analytics can automatically track most of these metrics without manual effort.

The difference between formative and summative evaluation lies in timing and purpose. Formative evaluation occurs during the learning process (quizzes, activities, comprehension questions) and its goal is to guide the participant and trainer on progress in real time. Summative evaluation occurs at the end of the program and serves to certify or accredit the level of learning achieved: final exams, application projects, competency assessments. In a complete training evaluation strategy, both are necessary and complementary: formative improves the process as it unfolds, while summative validates the final outcome.

Training ROI can be measured, although with some nuance. It is calculated by comparing the economic benefit obtained (productivity improvement, error reduction, lower turnover, etc.) with the total program cost. The basic formula is: ROI (%) = [(Total Training Benefit – Total Cost) / Total Cost] × 100, where benefit minus cost is the net benefit. The challenge lies in quantifying the benefit: some impacts, such as improved engagement or leadership development, are difficult to translate into figures. That is why Jack Phillips’ ROI model proposes converting intangible data into monetary values using estimation techniques and control groups. For most L&D teams, measuring the full ROI of every program is not feasible, but it is recommended for programs with the highest investment or strategic impact.

How often training should be evaluated depends on the type of program and its objectives. As a general rule: reaction evaluation (satisfaction) should be conducted after every training session; learning evaluation, at the end of the program or module; behavioral evaluation (transfer to the job), between 30 and 90 days after training, once the participant has had time to apply what they learned; and results or ROI evaluation, at least once a year for strategic programs. For continuous training programs, it is recommended to establish a quarterly evaluation cycle combining automatic LMS metrics with periodic follow-up surveys.
